AI Case Study: Tesla’s Autonomous Vehicles — A Deep Dive Into the Future of AI-Driven Transportation

Artificial intelligence is changing how people travel. While most AI case studies focus on software (chatbots, classroom tools, or content generators), Tesla is a rare example of AI deployed in the physical world, where mistakes can cost lives.

Tesla’s autonomous vehicle program is not just an artificial intelligence case study. It’s a real-time experiment running on public roads, powered by millions of cars generating billions of miles of learning data.

This article dives deep into how Tesla’s AI works, why it’s different, which challenges remain unresolved, and what the project reveals about the direction of AI.

The Problem Tesla Wanted to Solve

Driving is one of the most dangerous human activities.

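- Roughly 1.2 million road traffic deaths per year globally, according to WHO estimates

- A widely cited NHTSA analysis assigned the critical reason for about 94% of crashes to the driver, though that is not the same as saying human error alone caused them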

- Distraction, fatigue, emotion, and poor judgment make driving unpredictable

Tesla set out to answer a question:

“Can AI drive better than the average human?”

This sounds simple. But the underlying challenge is enormous. Driving requires:

- Real-time perception

- Prediction of other drivers

- Planning paths

- Following rules

- Handling chaos (construction, bad drivers, mis-marked roads)

No classroom example or AI education case study deals with stakes this high.

Why Traditional Autonomous Approaches Fall Short

Most self-driving competitors (Waymo, Zoox, and formerly Cruise) follow a LIDAR-first approach, usually combined with cameras, radar, and detailed maps.

LIDAR Pros:

- Very accurate depth

- Good object detection in controlled environments

LIDAR Cons (as Tesla argues):

- Historically expensive, though unit costs have fallen

- Degrades in heavy rain, fog, and snow

- Hard to scale globally, in Tesla’s view

- Not how humans drive

Tesla believes LIDAR-based systems will remain geo-fenced, not universal.

Tesla asks:

If a human can drive with just two eyes, why can’t AI learn the same way?

This vision-based philosophy defines Tesla’s entire architecture.

Tesla’s Core AI Philosophy: “Vision + Neural Networks = Human-Level Driving”

Tesla uses a camera suite + neural networks to interpret the world.

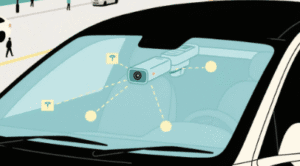

Hardware Suite Includes:

- 8 exterior cameras

- 1 cabin camera

- Radar (removed in newer models)

- Ultrasonics (also removed; replaced with “Tesla Vision”)

Why remove sensors?

Tesla’s stated reason is that it wants AI that generalizes, not AI that overfits to perfect sensor conditions. Fewer sensors also lower the cost of each car.

This is the biggest ideological difference between Tesla and its competitors, and it is what makes Tesla one of the most interesting AI case studies in engineering.

The Autonomy Stack: What Tesla’s AI Actually Does

Tesla’s Full Self-Driving (FSD) system can be understood as five conceptual layers:

1. Perception Layer (Seeing the World)

The neural networks analyze video from all cameras to detect:

- lanes

- traffic signals

- road edges

- cars, bikes, trucks, pedestrians

- cones, barricades, potholes

- brake lights, turn signals

- open spaces vs. blocked spaces

Tesla uses 3D occupancy networks to estimate free space around the vehicle.

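Unlike traditional bounding-box detection, which only labels object classes the network already knows, an occupancy representation marks any occupied volume, including objects the network cannot name.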
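To make the occupancy idea concrete, here is a minimal sketch of how a planner might query a voxel grid for free space. It is not Tesla's implementation: the `OccupancyGrid` class, the 0.5 threshold, and the grid dimensions are all assumptions chosen for illustration, and a real network would produce the probabilities rather than having them set by hand.

```python
from dataclasses import dataclass


@dataclass
class OccupancyGrid:
    """Toy 3D occupancy grid: probs[x][y][z] is P(voxel is occupied)."""
    probs: list              # nested lists, shape [nx][ny][nz]
    threshold: float = 0.5   # hypothetical cutoff for calling a voxel occupied

    def is_free(self, x: int, y: int, z: int) -> bool:
        return self.probs[x][y][z] < self.threshold

    def free_distance_ahead(self, x: int, y: int, z: int) -> int:
        """Count consecutive free voxels along +x, starting just ahead of (x, y, z)."""
        distance = 0
        for xi in range(x + 1, len(self.probs)):
            if not self.is_free(xi, y, z):
                break
            distance += 1
        return distance


def make_grid(nx: int, ny: int, nz: int, fill: float = 0.0) -> list:
    """Build an nx * ny * nz grid with every voxel set to the same probability."""
    return [[[fill for _ in range(nz)] for _ in range(ny)] for _ in range(nx)]


grid_probs = make_grid(6, 3, 2)
grid_probs[4][1][0] = 0.9  # an unlabeled obstacle four cells ahead in the middle lane
grid = OccupancyGrid(grid_probs)

print(grid.free_distance_ahead(0, 1, 0))  # free cells before the obstacle
print(grid.free_distance_ahead(0, 0, 0))  # neighbouring lane is clear to the grid edge
```

Note that the obstacle never needs a class label: the grid only records that the space is taken, which is the property that separates this representation from bounding boxes.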

2. Prediction Layer (Guessing Everyone’s Next Move)

Tesla’s AI predicts how each object will move:

- Will that pedestrian cross?

- Will that car cut into my lane?

- Will the cyclist merge?

- Will the vehicle ahead brake?

Prediction is arguably the hardest part, because human behavior is unpredictable.
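The simplest possible baseline for questions like "will that pedestrian cross?" is a constant-velocity rollout: assume each object keeps its current speed and direction, then check whether it enters the ego lane. The sketch below shows that baseline only. Tesla's predictor is learned, and the `Track` fields, the 1.75 m lane half-width, and the 0.5 s step are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Track:
    x: float   # metres ahead of the ego vehicle
    y: float   # metres to the left of the ego lane centre
    vx: float  # longitudinal velocity, m/s
    vy: float  # lateral velocity, m/s


def predict(track: Track, horizon_s: float, dt: float = 0.5) -> List[Tuple[float, float, float]]:
    """Constant-velocity rollout: returns (time, x, y) for each future step."""
    steps = int(round(horizon_s / dt))
    return [
        (k * dt, track.x + track.vx * k * dt, track.y + track.vy * k * dt)
        for k in range(1, steps + 1)
    ]


def first_time_in_lane(track: Track, horizon_s: float,
                       lane_half_width: float = 1.75, dt: float = 0.5) -> Optional[float]:
    """Return the first predicted time the object is inside the ego lane, or None."""
    for t, _x, y in predict(track, horizon_s, dt):
        if abs(y) <= lane_half_width:
            return t
    return None


walking = Track(x=20.0, y=4.0, vx=0.0, vy=-1.0)   # pedestrian walking toward the lane
standing = Track(x=20.0, y=4.0, vx=0.0, vy=0.0)   # pedestrian standing still

print(first_time_in_lane(walking, horizon_s=5.0))
print(first_time_in_lane(standing, horizon_s=5.0))
```

A baseline like this fails exactly where the text says prediction is hard: it cannot anticipate a pedestrian who stops, turns, or changes pace, which is why learned predictors are used instead.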

3. Planning Layer (Choosing the Safe Path)

The AI evaluates many candidate trajectories (“possible futures”) within milliseconds and selects the one that best balances safety, comfort, and progress.
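One common way to frame this step is as cost minimization: generate candidate paths, score each one, and pick the cheapest. The sketch below assumes that framing with only three candidates and two cost terms. The weights, the 2 m safety gap, and the function names are hypothetical, and nothing here is claimed to match Tesla's planner.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]  # (metres ahead, metres left of lane centre)


def make_candidate(target_offset: float, speed: float = 10.0,
                   horizon_s: float = 3.0, dt: float = 0.5) -> List[Point]:
    """Path that drives forward while shifting linearly to a target lateral offset."""
    steps = int(round(horizon_s / dt))
    return [(speed * dt * k, target_offset * k / steps) for k in range(1, steps + 1)]


def cost(path: List[Point], obstacles: Sequence[Point],
         safe_gap: float = 2.0, w_collision: float = 1000.0,
         w_lateral: float = 1.0) -> float:
    """Large penalty for passing too close to any obstacle, small penalty for leaving the lane centre."""
    min_gap = min((math.dist(p, o) for p in path for o in obstacles), default=math.inf)
    collision = w_collision if min_gap < safe_gap else 0.0
    lateral = w_lateral * abs(path[-1][1])
    return collision + lateral


def plan(obstacles: Sequence[Point],
         offsets: Sequence[float] = (-3.5, 0.0, 3.5)) -> float:
    """Return the lateral offset of the lowest-cost candidate path."""
    candidates = {off: make_candidate(off) for off in offsets}
    return min(candidates, key=lambda off: cost(candidates[off], obstacles))


print(plan([]))                            # empty road: stay in lane
print(plan([(20.0, 0.0), (20.0, -2.5)]))   # lane and right side blocked: go left
```

A production planner would score far more candidates and include terms for comfort, traffic rules, and the predicted motion of other road users, but the select-the-minimum structure is the same.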

4. Control Layer (Executing the Path)

FSD controls:

- acceleration

- braking

- steering

- lane changes

- speed adaptation

- merging behavior
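As a rough illustration of what "executing the path" means for speed, the sketch below uses a clamped proportional controller: the commanded acceleration is proportional to the speed error, limited to comfortable bounds. The gain and the acceleration limits are assumed values, and real vehicle controllers are considerably more elaborate.

```python
def speed_command(current_speed: float, target_speed: float,
                  kp: float = 0.5, max_accel: float = 2.0,
                  max_brake: float = -4.0) -> float:
    """Proportional speed control: returns an acceleration in m/s^2, clamped to limits."""
    accel = kp * (target_speed - current_speed)
    return max(max_brake, min(max_accel, accel))


def simulate(initial_speed: float, target_speed: float,
             steps: int, dt: float = 0.1) -> float:
    """Apply the controller repeatedly and return the final speed in m/s."""
    speed = initial_speed
    for _ in range(steps):
        speed += speed_command(speed, target_speed) * dt
    return speed


print(speed_command(10.0, 30.0))  # large error: acceleration is capped
print(speed_command(30.0, 10.0))  # large negative error: braking is capped
print(round(simulate(0.0, 10.0, steps=300), 2))  # settles near the target
```

The clamping is the design choice worth noticing: it keeps the response smooth for passengers even when the planner asks for a large speed change.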

5. Feedback Loop (Learning From Mistakes)

Tesla gathers data whenever:

- the AI hesitates

- the human takes over

- the AI misinterprets a situation

This creates one of the world’s largest driving datasets.
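The three triggers listed above can be sketched as a small rule set that decides which moments of a drive are worth keeping. This is an illustration under stated assumptions, not Tesla's pipeline: the `Frame` fields, the confidence threshold, and the two-second window are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Frame:
    timestamp: float            # seconds since the drive started
    driver_override: bool       # the human took over
    planner_confidence: float   # 0..1, hypothetical "hesitation" signal
    perception_mismatch: bool   # hypothetical flag: later frames contradicted this one


def trigger_reason(frame: Frame, min_confidence: float = 0.6) -> Optional[str]:
    """Return why this frame should be kept, or None if it is unremarkable."""
    if frame.driver_override:
        return "takeover"
    if frame.perception_mismatch:
        return "misinterpretation"
    if frame.planner_confidence < min_confidence:
        return "hesitation"
    return None


def collect_clips(frames: List[Frame],
                  window_s: float = 2.0) -> List[Tuple[str, List[Frame]]]:
    """For each triggered frame, keep the frames from the preceding window_s seconds."""
    clips = []
    for frame in frames:
        reason = trigger_reason(frame)
        if reason is not None:
            clip = [f for f in frames
                    if frame.timestamp - window_s <= f.timestamp <= frame.timestamp]
            clips.append((reason, clip))
    return clips


drive = [
    Frame(0.0, False, 0.9, False),
    Frame(1.0, False, 0.9, False),
    Frame(2.0, False, 0.4, False),   # low confidence: flagged as hesitation
    Frame(3.0, False, 0.9, False),
    Frame(4.0, True, 0.9, False),    # driver takes over: flagged as takeover
]
clips = collect_clips(drive)
for reason, clip in clips:
    print(reason, [f.timestamp for f in clip])
```

Keeping the seconds before each trigger matters because the useful training signal is usually what led up to the intervention, not the intervention itself.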

Tesla’s Secret Weapon: Real-World Data

This is where Tesla has its clearest advantage over competitors.

Tesla Fleet (2025 data)

- Over 4 million cars on the road

- Billions of miles driven under Autopilot/FSD

- Millions of corner cases collected

- Thousands of labeled interventions per week

Where other companies lean more heavily on simulation and small test fleets, Tesla trains mainly on data from customer-driven cars.

This real-world scale makes Tesla one of the most important AI case studies, even outside transportation.

Enter Dojo — Tesla’s Custom AI Supercomputer

To process all that data, Tesla built Dojo, a purpose-built training cluster.

Why Dojo Matters:

- Trains video-based neural networks faster

- Built for Tesla’s specific workloads

- Aims to reduce dependency on NVIDIA GPUs, which Tesla also uses at large scale

- Allows Tesla to update FSD models more frequently

- Enables richer perception of 3D environments

Dojo accelerates the AI learning cycle:

more data → faster training → better driving → safer roads

Dojo represents the industrial version of what many AI education case studies attempt on a small scale: rapid learning through continuous feedback.

Safety Improvements Through AI

Tesla publishes its own safety reports, which indicate:

- Accident rates per mile are lower when Autopilot/FSD is active (a raw comparison, not adjusted for road type or driver mix)

- Rear-end collisions especially decrease

- Highway lane-keeping is significantly improved
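A per-mile accident rate is simply crashes divided by miles driven, scaled to a convenient unit. The sketch below shows the arithmetic with made-up numbers. The crash and mileage figures are hypothetical and are not taken from any Tesla report.

```python
def crashes_per_million_miles(crashes: int, miles: float) -> float:
    """Normalize a crash count by exposure so fleets of different sizes can be compared."""
    if miles <= 0:
        raise ValueError("miles must be positive")
    return crashes * 1_000_000 / miles


# Hypothetical figures, for illustration only.
assisted_rate = crashes_per_million_miles(crashes=2, miles=10_000_000)
manual_rate = crashes_per_million_miles(crashes=5, miles=5_000_000)

print(f"assisted: {assisted_rate:.2f} crashes per million miles")
print(f"manual:   {manual_rate:.2f} crashes per million miles")
print(f"ratio:    {assisted_rate / manual_rate:.2f}")
```

A ratio like this is only meaningful if both groups drive under similar conditions. If assisted miles are mostly highway miles, which tend to have fewer crashes per mile, the raw ratio will flatter the assisted system.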

Even critics agree that AI:

- never gets drunk

- never texts

- never falls asleep

- sees 360°

- reacts in milliseconds

But Tesla is not perfect.

Disengagements still occur, especially in:

- snowy weather

- poorly marked roads

- chaotic city centers

- unusual intersections

This is expected. AI is not yet superhuman.

But it is improving steadily, and with a fleet far larger than any competitor’s.

Regulatory, Ethical, and Social Challenges

A realistic artificial intelligence case study must address more than tech.

Regulatory Barriers

- U.S. states have different laws

- Europe demands stricter safety metrics

- Full autonomy approvals remain complex

Ethical Questions

- Who is responsible in an accident?

- Should AI make split-second moral decisions?

- How do we prevent over-reliance by drivers?

Societal Impact

- Professional drivers may be displaced

- Cities may change how they design roads

- Insurance models may shift from driver fault → system fault

Tesla is not just building a product.

It is forcing society to rethink mobility, responsibility, and trust in AI.

What This AI Case Study Teaches the World

Whether you compare Tesla with educational systems, logistics, healthcare, or AI education case studies, the core lessons are the same:

1. AI improves fastest with real-world feedback loops

Tesla proves that massive data + constant iteration beats perfection in isolation.

2. Vision-based AI may be able to match expensive sensors

If Tesla’s bet pays off, it is a blueprint for other industries.

3. Scaling matters more than early accuracy

Billions of miles matter more than perfect demos.

4. AI solves problems humans struggle to optimize

Driving is chaotic. AI excels at consistency.

5. The future is end-to-end AI systems

Tesla is moving from hand-coded driving logic to neural networks trained end to end.

6. True disruption happens when AI meets physical infrastructure

Cars, factories, and energy systems create new categories of AI transformation.
